AI is not rewriting the cyber playbook. It is making the old one run at machine speed.

For security leaders, the wrong question still dominates the AI-and-cyber conversation. Too much attention is going into whether AI is creating completely new forms of attack. The stronger public evidence points somewhere else. AI is making familiar attacks markedly faster, cheaper and easier to scale. The NCSC says the near-term threat comes from the enhancement of existing tactics and procedures. Microsoft says attacker objectives have not changed, but tempo, iteration and scale have. Wiz reaches a similar conclusion in cloud security: AI influenced attack activity in 2025 by enabling threat-actor workflows and expanding attack surfaces, not by introducing fundamentally new cloud attack techniques. That matters because CISOs do not need a new theory of cyber risk. They need an operating model that can cope with old tradecraft moving at machine speed.

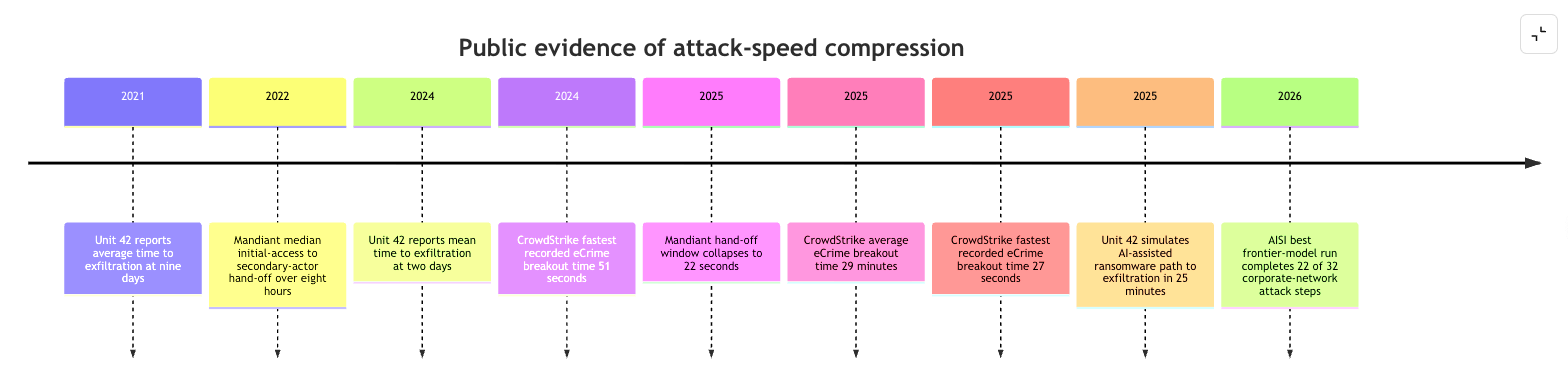

The speed compression is no longer theoretical. Mandiant reports that the median time from initial access to hand-off to a secondary threat group fell from more than eight hours in 2022 to just 22 seconds in 2025. CrowdStrike reports an average eCrime breakout time of 29 minutes in 2025, with the fastest observed at 27 seconds. Palo Alto Networks Unit 42 says the average time from compromise to exfiltration fell from nine days in 2021 to two days in 2024, and that one in five incidents reached exfiltration in under an hour. In Unit 42’s own AI-assisted simulation, a ransomware path from compromise to exfiltration took 25 minutes. Those figures are not interchangeable metrics, but together they show a clear direction of travel: the time defenders have to recognise, decide and contain is collapsing.
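
To make the scale of that compression concrete, the short sketch below turns two of the quoted before-and-after pairs into ratios. The figures are the ones cited above; the helper name `compression` is purely illustrative, and because each pair measures a different thing, the ratios should only be read within a pair, not compared across vendors.

```python
# Figures as quoted in the paragraph above. Each pair is a different metric
# from a different vendor, so a ratio is only meaningful within its own pair.
SECONDS = {"second": 1, "minute": 60, "hour": 3600, "day": 86400}


def compression(before, after):
    """Return how many times shorter `after` is than `before`.

    Each argument is a (value, unit) tuple, e.g. (8, "hour").
    """
    before_s = before[0] * SECONDS[before[1]]
    after_s = after[0] * SECONDS[after[1]]
    return before_s / after_s


# Mandiant hand-off time, 2022 vs 2025. The source says "more than eight
# hours", so this ratio is a lower bound.
handoff = compression((8, "hour"), (22, "second"))

# Unit 42 compromise-to-exfiltration time, 2021 vs 2024.
exfil = compression((9, "day"), (2, "day"))

print(f"hand-off window: at least {handoff:.0f}x shorter")
print(f"exfiltration window: {exfil:.1f}x shorter")
```

On the quoted numbers, the hand-off window shrank by three orders of magnitude, while the exfiltration window shrank by a factor of 4.5.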

Where is AI actually helping attackers today?

The strongest evidence is concentrated in the parts of the attack chain that used to require time, fluency and repetition. Microsoft says AI-enabled phishing click-through rates can reach 54%, compared with roughly 12% for more traditional campaigns. Google Threat Intelligence Group says state-backed and criminal actors are using AI for technical research, target development, lure creation, malware development and post-compromise activities. It also says it has not yet seen a breakthrough capability that fundamentally changes the threat landscape. In other words, the commercial and operational payoff is not that AI has discovered a magical new intrusion path. It is that AI removes friction from reconnaissance, persuades more people to click, helps attackers code and debug more quickly, and speeds up the conversion of stolen access into follow-on actions.

That distinction matters because it keeps the defensive response grounded. ENISA’s latest threat landscape still finds phishing to be the dominant identified initial infection vector, accounting for roughly 60% of the cases it analysed, with vulnerability exploitation at 21.3%. M-Trends 2026 ranks them differently: it says exploits remained the most common initial infection vector, while highly interactive voice phishing surged to 11% and became the second most observed vector. Either way, the top of both lists is familiar. This is the pattern security leaders should be planning against: classic entry points, accelerated by AI, with identity abuse and social engineering doing much of the heavy lifting. The same old weaknesses still matter; they are simply being exploited faster.

The UK retail incidents in 2025 are instructive for exactly that reason. Public statements from M&S and Co-op show meaningful customer-data and operational impact, but the more important signal came from the NCSC’s recommendations to the wider sector. It urged organisations to deploy MFA comprehensively, monitor risky logins and unauthorised account misuse, review the legitimacy of privileged access, strengthen helpdesk password-reset verification and consume TTP-driven intelligence quickly. That is not the guidance you issue when the main problem is science-fiction malware. It is the guidance you issue when identity abuse, social engineering and fast operational pivoting are the real concern. The public evidence does not prove AI caused those incidents. But it does show that the most consequential retail breaches are increasingly compatible with an AI-enhanced access model.

Google/Mandiant’s work on ShinyHunters-branded SaaS extortion makes the identity lesson even clearer. These actors used sophisticated voice phishing and victim-branded credential-harvesting pages to obtain SSO credentials and MFA codes, then pivoted into SaaS platforms to exfiltrate sensitive data and internal communications. Mandiant is explicit that this was not the result of vulnerabilities in the vendors’ products or infrastructure. It was social engineering plus identity abuse, executed effectively. Its defensive conclusion is correspondingly practical: move towards phishing-resistant MFA such as FIDO2 keys or passkeys wherever possible. That is what a speed-aware mitigation looks like. It does not try to outpredict every future lure. It makes the attacker’s fastest route materially harder to use.

Practitioner reporting from CRN and ChannelWeb reinforces the same conclusion in plain language. ChannelWeb, writing for the UK channel, says ransomware now moves in minutes rather than days, and that attackers are still breaking in using the same methods they used five years ago. CRN reports that many experts now believe organisations will have to automate more security decisions, because there is no realistic way for human-powered response to keep up with machine-powered attacks. That is an uncomfortable shift for many security teams, especially where false positives can shut down real business activity. But it is also an unavoidable one. If the attacker’s time advantage keeps growing, response engineering becomes as strategic as prevention.

Where should a CISO focus first?

The best near-term control family is exposure management, because it tackles the exact weaknesses AI helps attackers find and chain faster. Attack-path analysis and exposure mapping shift attention away from raw vulnerability volume and towards the small set of externally initiated, exploitable paths that could actually lead to critical assets. Microsoft’s exposure-management and Defender for Cloud documentation frames this explicitly in terms of attack paths that begin outside the organisation and progress toward vital assets. Wiz’s cloud retrospective supports the same prioritisation from a threat perspective: the attacker still starts with familiar weaknesses such as vulnerabilities, exposed secrets and misconfigurations. When attack speed is the problem, less noise and better prioritisation matter more than ever.
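
The idea behind attack-path prioritisation can be sketched in a few lines. The example below is a minimal illustration, not how any vendor's product works: the asset names, the `EDGES` graph and the `attack_paths` helper are all hypothetical. It uses a breadth-first search to find which critical assets are reachable from an external starting point, and by which route.

```python
from collections import deque

# Hypothetical environment. An edge A -> B means "an attacker who controls A
# can reach B", for example via a vulnerability, exposed secret or
# misconfiguration.
EDGES = {
    "internet": ["web-app", "vpn-gateway"],
    "web-app": ["app-server"],
    "app-server": ["ci-runner", "customer-db"],
    "vpn-gateway": [],
    "ci-runner": ["cloud-admin-role"],
    "legacy-host": ["customer-db"],  # weak, but not reachable from outside
}
CRITICAL = {"customer-db", "cloud-admin-role"}


def attack_paths(edges, start, critical):
    """Breadth-first search from `start`.

    Returns the shortest path to each critical asset that is reachable.
    """
    paths = {}
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in critical:
            paths[node] = path
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return paths


paths = attack_paths(EDGES, "internet", CRITICAL)

# Assets that sit on at least one externally initiated path to a critical
# asset are the ones to fix first.
on_path = {node for p in paths.values() for node in p} - {"internet"}

for target, path in paths.items():
    print(" -> ".join(path))
```

In this toy graph, `legacy-host` has a route to the customer database but never appears in `on_path`, because nothing external leads to it. That is the prioritisation point: a finding's position on a reachable path matters more than its presence in a scan report.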

The second priority is identity. Phishing-resistant MFA, stricter service-desk verification, tighter privileged-access governance and ITDR capabilities all slow the shortest path from initial access to business impact. CISA calls phishing-resistant MFA the most secure form of MFA because weaker methods remain vulnerable to phishing, push-bombing and SIM-swap style bypass. NCSC’s retail guidance put helpdesk workflows and admin-account legitimacy near the top of the response list. CrowdStrike’s identity-security material now markets ITDR in explicitly operational terms: stop identity-based attacks in real time, correlate identity and endpoint signals, and reduce lateral movement. In a world of faster phishing, faster vishing and faster token abuse, identity becomes the speed governor.

Detection and response then have to be reworked for machine-time assistance. The main opportunity today is not to hand every incident to an autonomous agent and hope for the best. It is to automate the bounded, repetitive, high-volume tasks that consume analyst time and delay action. Microsoft’s Phishing Triage Agent is a good illustration. Microsoft says it reduces repetitive investigation work and accelerates response; in production reporting, the company says it enabled up to 78% faster triage, 77% more accurate verdicts and 6.5 times more malicious emails identified. Palo Alto Networks frames Cortex XSOAR and XSIAM around the same operating need: automate workflows, centralise intelligence, and accelerate incident remediation. The more the attacker compresses time, the more defenders need consistent playbooks executed at scale.
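
What "bounded" automation means in practice can be shown with a small decision rule. The sketch below is an assumption-laden illustration rather than a description of any product named above: the alert kinds, action names, asset tiers and the 0.9 threshold are all hypothetical. Automatic action is allowed only for pre-approved alert types, above an agreed confidence level, and never on critical assets; everything else goes to a human.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    kind: str          # e.g. "phishing_email", "risky_login"
    confidence: float  # detector confidence, 0.0 to 1.0
    asset_tier: str    # "standard" or "critical" (hypothetical tiers)


# Hypothetical policy: only these alert kinds have a pre-approved action.
AUTO_ACTIONS = {
    "phishing_email": "quarantine_message",
    "risky_login": "revoke_sessions",
}
MIN_CONFIDENCE = 0.9  # illustrative threshold, to be agreed with the business
ESCALATE = "escalate_to_analyst"


def decide(alert: Alert) -> str:
    """Return the automatic action for an alert, or escalate to a human."""
    action = AUTO_ACTIONS.get(alert.kind)
    if action is None:
        return ESCALATE  # no approved playbook for this alert kind
    if alert.asset_tier == "critical":
        return ESCALATE  # a false positive here is too costly to automate
    if alert.confidence < MIN_CONFIDENCE:
        return ESCALATE  # detector is not sure enough
    return action


print(decide(Alert("phishing_email", 0.97, "standard")))
print(decide(Alert("risky_login", 0.97, "critical")))
```

Keeping the approved list and the threshold as explicit data makes the boundary auditable, which matters when the concern is false positives shutting down real business activity.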

Continuous validation and deception are increasingly important because they give defenders proof and signal before a fast-moving intrusion reaches its objective. Validation platforms from SafeBreach, Cymulate and Pentera are built around the idea that not every finding matters equally; the priority is to identify what is actually exploitable and whether controls stop realistic attack paths in production. Deception complements that by creating high-fidelity early-warning alerts. Thinkst’s Canarytokens are a good example of a simple, cheap control that can reveal identity compromise or credential misuse early. The academic frontier is moving too: the USENIX paper Cloak, Honey, Trap proposes LLM-specific deception and counterattack techniques that can disrupt, detect or neutralise malicious agents. When attackers automate, they often become easier to steer into prepared tripwires.
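
The logic of a deception tripwire is simple enough to sketch. The example below is a generic illustration and does not use or represent the Canarytokens service or its API: the decoy account names, the event format and the `decoy_hits` helper are hypothetical. The principle is that no legitimate user or system should ever touch a decoy, so any use of one is a high-signal alert.

```python
# Hypothetical decoy accounts planted in the directory. Nothing legitimate
# should ever authenticate with them.
DECOY_ACCOUNTS = {"svc-backup-legacy", "adm-finance-old"}


def decoy_hits(auth_events):
    """Return every authentication event that touches a decoy account.

    Failed attempts count too: even trying a decoy credential means someone
    has found and used planted material.
    """
    return [e for e in auth_events if e["account"] in DECOY_ACCOUNTS]


# Illustrative authentication log entries (made-up format and values).
events = [
    {"account": "alice", "source_ip": "10.0.4.12", "result": "success"},
    {"account": "svc-backup-legacy", "source_ip": "10.0.9.77", "result": "failure"},
    {"account": "bob", "source_ip": "10.0.4.31", "result": "failure"},
]

hits = decoy_hits(events)
for hit in hits:
    print(f"decoy account {hit['account']} used from {hit['source_ip']}")
```

Note that Bob's failed login is ignored while the failed attempt on the decoy is flagged. The signal comes from which credential was used, not from whether the attempt succeeded.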

The next defensive shift is already visible. DARPA’s AI Cyber Challenge demonstrated autonomous systems securing critical open-source software. DeepMind says CodeMender has already upstreamed dozens of security fixes to open source projects. OpenAI says Codex Security is designed to reduce noise by validating findings and surfacing higher-confidence issues with fixes. AISI’s cyber-range research shows frontier models are improving materially on multi-step corporate-network attack scenarios, with the best run completing 22 of 32 steps. The implication is not that defenders should panic. It is that they should prepare for a near-term world in which AI compresses time for both attackers and defenders, with the defensive benefit going only to organisations that have the data, workflows and automation discipline to exploit it.

The practical conclusion for CISOs is simple. AI is not replacing cyber fundamentals. It is increasing the penalty for weak ones. The organisations that will cope best are not the ones buying the loudest “AI security” branding. They are the ones that can demonstrate five things: exploitable exposure is falling, identity abuse is harder, high-volume SOC work is increasingly automated, controls are continuously validated, and recovery remains possible even when an attacker moves far faster than a human analyst can. In the current environment, cyber resilience is becoming a test of whether the defender can close the velocity gap before a familiar intrusion path turns into a machine-speed business crisis.

Recommendations and suggested structure

For most security leaders, the most pragmatic near-term programme is sequential rather than sprawling. Start by hardening identity workflows and service-desk verification, because that removes the fastest access path. Then move vulnerability and posture work towards exploitability and attack-path context, because AI is helping attackers prioritise the same reachable weaknesses. After that, automate bounded response cases in the SOC, expand SaaS and cloud telemetry, and validate continuously that the controls still work after every material change. That ordering is consistent with NCSC’s retail guidance, Mandiant’s SaaS-theft analysis, Microsoft’s operational framing and the speed data from CrowdStrike and Unit 42.

A clean structure for publishing this as a CISO-facing blog would be:

1. Set the frame: AI is not rewriting the playbook; it is accelerating the old one.
2. Show the evidence: use the hours-to-minutes-to-seconds timeline and one or two hard metrics.
3. Ground it in incidents: use UK retail and SaaS identity-theft cases to show why this matters now.
4. Explain where AI adds speed: phishing, recon, exploit matching, malware iteration, stolen-data triage.
5. Translate into controls: exposure management, phishing-resistant identity, automated response, validation.
6. End with the strategic implication: cyber risk is increasingly a speed problem, not just a tooling problem.